Improved chatbot customer experience: sleep() is all you need

What AI chatbot developers can learn from game developers about human psychology

Time-to-resolution is a key metric in customer service, which is why there is a lot of interest in improving chatbots. But if a chat interface is a piece of string, it has two ends, and only one end is a computer. Until we get to the point where we have AI talking to AI and we’ve completely removed the human, one end of that experience is a human being. That means to optimize time to resolution, the human and the machine have to be in harmony.

Chatbot metrics and incentives

Both ends of the chat experience have a common incentive: they both want to minimize time-to-resolution. But this incentive alignment is imperfect because there is another incentive: cost minimization. As the human on the other end of the line, I know I am 100% committed to reducing time-to-resolution because my cost is my time, but the cynic in me knows that the business I’m speaking to is also laser focussed on reducing labour cost, and that is not necessarily perfectly aligned to time-to-resolution - we’ve all been on hold.

That’s why we speak to an AI-powered chatbot at the start of the interaction. The business hopes I won’t need a human. How do I know I’m speaking to AI anyway, aren’t LLMs good enough to pass a customer-service limited variation of a Turing test? Does the model just need more fine-tuning on QA pairs?

I believe that it will be possible to QLoRA a model to the point where it is indistinguishable from your offshore customer service team, but there is another tell which I just observed first hand on the AMEX chat app. (Aside: can we make QLoRA a verb yet?)

Why do I care if I have to speak to an AI in the beginning? To put it simply, there are times when I want the AI to just get out of the way and let me speak to a person because I believe that will be the quickest way to resolve my issue.

So how did I know my chat interaction started with a chatbot? Simple. It was too fast.

Time-to-resolution is an end-to-end metric, but a simplistic approach of reducing completion latency may not necessarily yield an end-to-end improvement in time-to-resolution if it elicits an undesirable response from the human, such as attempts to escalate to a human customer service representative. So what can we do?

Normal humans don't always feel the need for speed.

Rate-limiting LLM completion time to improve time-to-resolution

As bizarre as it may sound, why not just slow down?

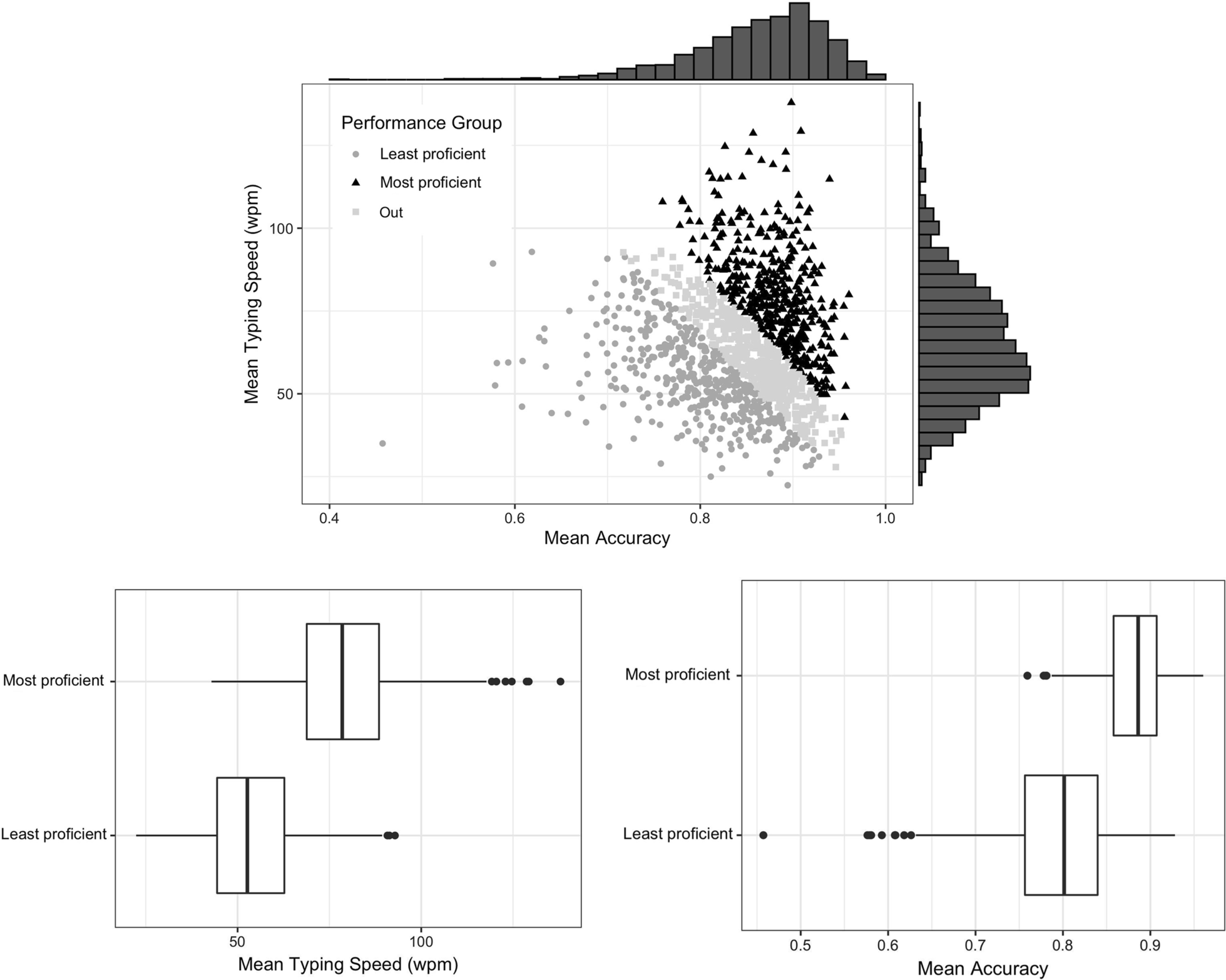

The intuition we humans have (from years on WhatsApp and Teams watching “so and so is typing…” indicators) is that people need a moment to write a message. There is data we can use for this, such as a study by Pinet et al.. Their dataset is from a student population, which probably isn’t a bad proxy for customer support workers, assuming French keyboards don’t make a huge difference. On that basis we may assume that 100 words per minute is a realistic rate for a high-performance human customer support worker.

Pinet, S., Zielinski, C., Alario, FX. et al. Typing expertise in a large student population. Cogn. Research 7, 77 (2022). https://doi.org/10.1186/s41235-022-00424-3

For simplicity let’s assume full message transmission, none of that character-by-character streaming that ChatGPT made mainstream. An implementation of this idea may look like this:

def required_sleep_time_s_for_message(message: str, words_per_minute: int):

words = len(str(message).split())

return words / words_per_minute * 60

start_time = time.time()

response = self.agent.chat(prompt)

completion_time = time.time() - start_time

sleep_time = required_sleep_time_s_for_message(response, 100) - completion_time

if sleep_time > 0:

time.sleep(sleep_time)

return responseGiven the amount of implementation effort required, I’d suggest this approach would be worth an AB-test for any chatbot application.

Delaying AI responses to increase human comfort

Back-pressure propagation and rate-limiting are well established queuing practices in high-performance system design, but inserting an artificial delay when generating a response for a human may seem like unrefined deception.

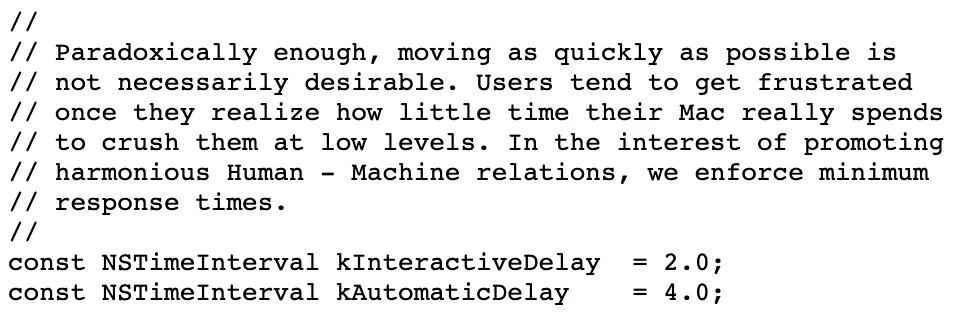

However, if this approach seems too crude or regressive, it should be noted that making the AI go slow for the sake of human comfort is not a novel suggestion. This has been established practice in the gaming industry for years. This code comment from Apple’s Chess program source code sums it up pretty well:

Patronizing, yet pleasingly harmonious?

Resistance to this idea is understandable; delaying an answer to a customer query seems like it must be an anti-pattern. After all, Google has taught us that the righteous and profitable path is to give people the information they want as rapidly as possible.

The nuance here is that chat is an experience that is unlike search. The human has already tried Google and that has failed to resolve their problem. Now they are reaching out for a chat experience because they want to be heard. Active listening requires patience.

So, for the sake of harmonious Human-Machine relations, perhaps we can make the AI sleep a little.